Tom Kelliher, CS 325

Mar. 30, 2011

Read 4.1-4.3.

TCP Reliability.

Network layer introduction.

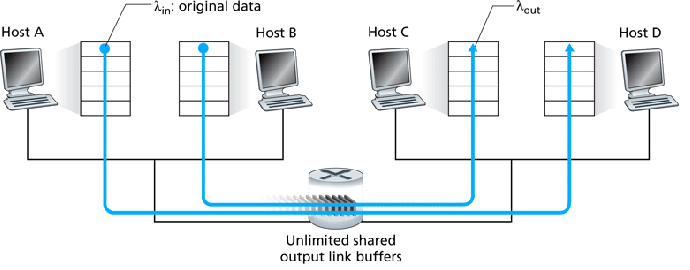

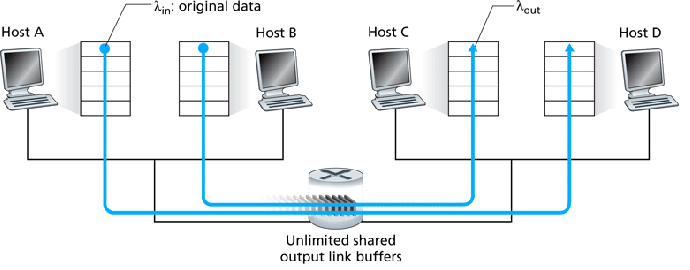

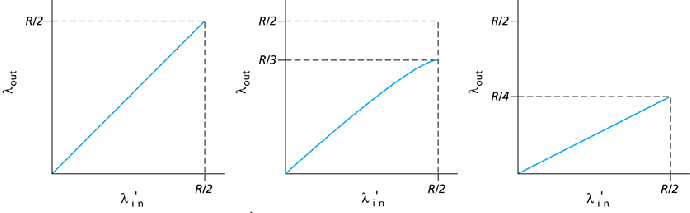

Assume the senders equally share the available bandwidth.

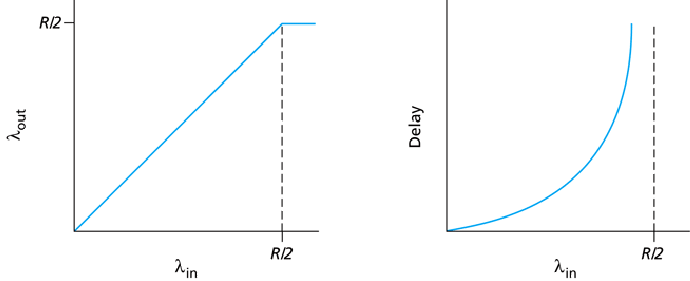

Due to retransmits,

![]() .

.

The graph on the left assumes the sender is omniscient and knows when the router has free buffers and only transmits segments then, avoiding dropped segments. -- unrealistic.

The middle graph shows what could happen if the sender retransmits only segments known to be lost -- again, unrealistic. Here, we assume 17% of segments are retransmits.

The graph on the right shows this realistic scenario, assuming each segment is retransmitted once.

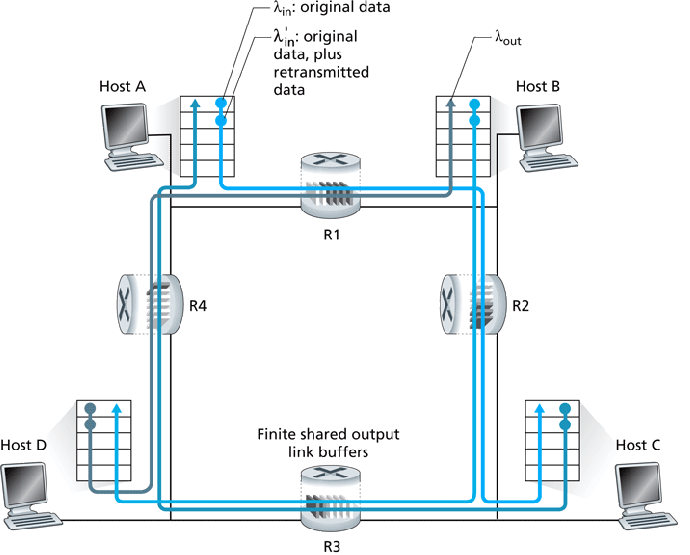

Worst case, B-D traffic could completely lock-out A-C traffic beyond the

point at which

![]() saturates the routers' transmit

capacity:

saturates the routers' transmit

capacity:

![\includegraphics[width=3.5in]{Figures/fig03_48.eps}](mar30img13.png)

![\includegraphics[width=4in]{Figures/fig03_49.eps}](mar30img14.png)

Two approaches:

Third idea for TCP: increased RTTs mean congestion is beginning to become a problem.

Receiver responsible for getting the indication back to the sender.

We therefore have

![]() .

.

So, RcvWindow, controlled by receiver, can be used to throttle sender rate.

We then have:

TCP congestion control algorithm:

Not as bad as a timeout.

Increase rate is controlled by RTT -- a lower RTT results in faster CongWindow increase rate.

Often implemented by increasing CongWindow by

![]() for each new ACK.

for each new ACK.

![\includegraphics[width=5in]{Figures/fig03_51.eps}](mar30img21.png)

Exponential increase in CongWindow during SS phase:

![\includegraphics[width=4in]{Figures/fig03_52.eps}](mar30img22.png)

![\includegraphics[width=4in]{Figures/fig03_53.eps}](mar30img23.png)

TCP Reno = current algorithm. Is decrease from timeout or fast retransmit?

| State | Event | Sender Action | Comment |

| Slow Start (SS) | New ACK received | CongWin = CongWin + MSS. if

(CongWin |

CongWin doubles every RTT. |

| Congestion Avoidance (CA) | New ACK received | CongWin = CongWin + MSS(MSS/CongWin) | CongWin increases by 1 MSS every RTT. |

| SS or CA | Triple Dup ACK | Threshold = CongWin/2. CongWin = Threshold. set state to CA. | Fast recover; multiplicative decrease. |

| SS or CA | Timeout | Threshold = CongWin/2. CongWin = 1 MSS. Set state to SS. | |

| SS or CA | Duplicate ACK received | Increment duplicate ACK count for segment |

![\includegraphics[width=4in]{Figures/fig03_54.eps}](mar30img25.png)

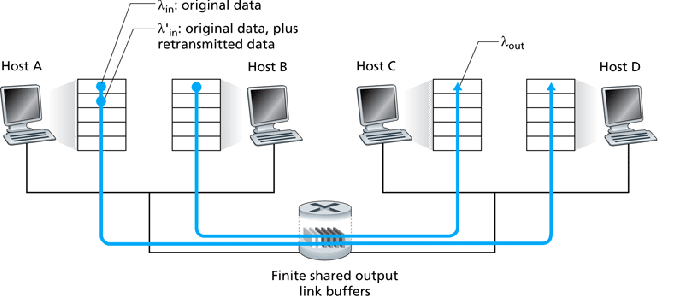

Suppose, initially, connection 1 has a higher throughput (CongWindow) than connection 2:

![\includegraphics[width=4in]{Figures/fig03_55.eps}](mar30img26.png)

This shows what happens during 3DupACK events -- multiplicative decrease. Eventually, we converge to equal throughput.

On timeout, both connections will end up with a CongWindow of 1 MSS.